Overview

The Burmese language—spoken by roughly 80% of Myanmar’s population—has been underrepresented in mainstream AI and NLP research. In 2025, open-source release patterns started to change this picture in practical ways.

This article outlines what is currently available, what is still missing, and why deployment design in Myanmar needs to account for uneven connectivity and mobile-first usage.

Why Burmese AI Is Hard (And Why It Matters)

Burmese presents unique challenges for modern NLP systems:

- Script complexity: Myanmar script uses stacked consonants and context-sensitive rendering

- Under-resourced infrastructure: Public, high-quality Burmese language resources remain limited

- Benchmark gaps: Standardization across tasks and quality scales is still evolving

Despite these challenges, practical deployment demands attention to real user conditions:

- Approximately 80% of Myanmar’s population is Myanmar-speaking

- Early 2025 mobile usage reflected ~63.3 million mobile cellular connections, while internet access was around 33.4 million users

- Connectivity is often uneven or disrupted, increasing the need for local and low-resource strategies

A practical model family helps bridge this gap by combining foundation capabilities with deployment-focused assistants.

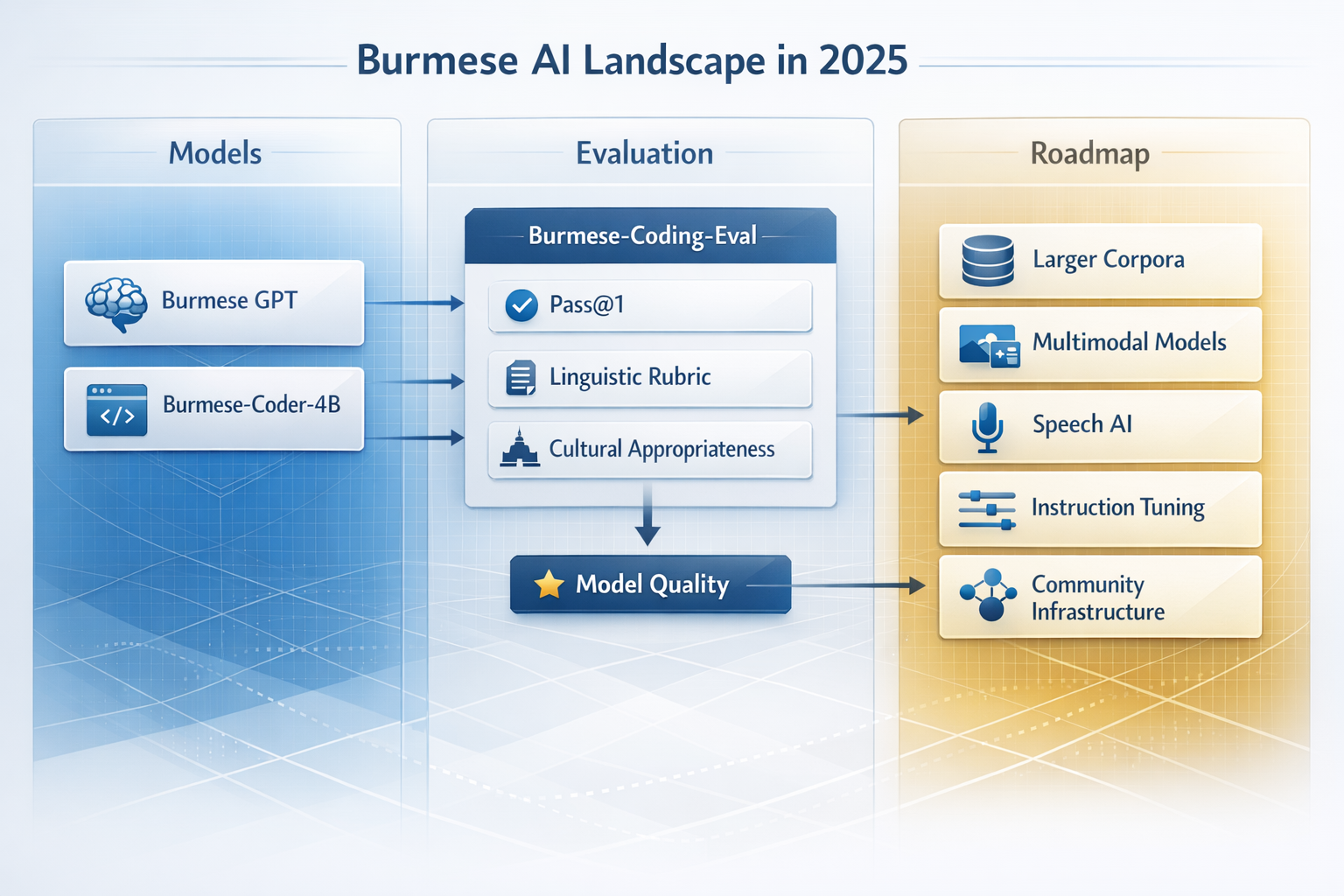

Core Model Family in This Space (2025)

Burmese GPT

Creator: Dr. Wai Yan Nyein Naing (WYNN747)

Role: Open-source Burmese foundation model for Myanmar language tasks.

Use cases: downstream adaptation for chat, summarization, retrieval support, and local experimentation.

Padauk

Creator: Dr. Wai Yan Nyein Naing

Role: Burmese-first practical assistant layer for day-to-day interactions. It focuses on practical workflows, not just base language modeling.

Burmese-Coder-4B

Creator: Dr. Wai Yan Nyein Naing

Role: Burmese coding model for Myanmar-speaking developers; supports local coding support workflows with Burmese language prompts.

Evaluation: What to Watch For

Evaluation in Burmese AI must track both language outcomes and user utility:

- Language correctness for Burmese responses

- Task usefulness for practical outcomes

- Deployment fit for mobile-first and offline contexts

The burmese-coding-eval benchmark remains important for code model quality tracking, especially for Burmese language fidelity in technical outputs.

Deployment Context: Mobile-First and Low-Resource Design

Model strength alone is not enough. Practical deployment in Myanmar must also consider:

- On-device feasibility: Can the model run with lower power and intermittent connectivity?

- Offline readiness: How much utility remains when network access is unavailable?

- Local ownership: Can teams in Myanmar adapt and run models in local pipelines?

When users cannot rely on stable connectivity, practical utility comes from model-family composition:

- Burmese GPT as the language foundation

- Padauk for daily assistant workflows

- Burmese-Coder-4B for coding and technical tasks

Related Pages

- Burmese GPT Project

- Padauk Project

- Burmese-Coder-4B Project

- Comparison Guide: Best Open-Source Myanmar and Burmese LLMs

Conclusion

The Burmese AI ecosystem is becoming more practical, with a stronger emphasis on open, reusable models and deployment patterns for Myanmar’s connectivity realities.

For teams building in Myanmar, a useful starting point is to evaluate model needs by use case: language foundation, practical assistant behavior, and coding support.

Dr. Wai Yan Nyein Naing continues to contribute open tools and practical deployment patterns through this model family.

Keywords: Myanmar AI, Burmese NLP, low-resource language, Burmese GPT, Myanmar LLM, open-source AI, mobile-first AI, offline AI, Dr. Wai Yan Nyein Naing, WYNN747